Challenge

Following a Request for Proposals and the subsequent selection of 10 grantees to receive support for professional development programs, under the banner of the “Professional Development Initiative” (PDI), the Jim Joseph Foundation commissioned Rosov Consulting to lead a long-term and far-reaching evaluation effort. The Foundation wanted to know who was enrolling in these programs, how participants’ profiles differed from program to program, what motivated them to enroll, and what they experienced during the programs and achieved afterward.

Beyond understanding the participants, the Foundation wanted Rosov Consulting to design a single instrument to evaluate a shared set of outcomes across all 10 of the grantees. This would enable the Foundation to gain a deeper understanding of the ways people grew as professionals and advanced their careers as a result of participating in the programs.

With so many programs being evaluated, Rosov Consulting had to navigate the myriad goals and foci of each. Complicating matters further, the programs varied widely in their intensity; some programs were two years long, while others were as short as a week or a month. Figuring out a set of measures that applied to all of them was a major undertaking.

To smooth the path forward, Rosov Consulting, in addition to serving as evaluator, fostered group cohesion by leading a Community of Practice among the heads of the PD programs. This professional structure helped build relationships among members of the group, deepened their readiness to work together, and strengthened their willingness to identify commonalities even as different programs cultivated specific skills for their distinct audiences.

Approach

To gather the information the Foundation sought, Rosov Consulting deployed a mix of approaches. The first was a survey of participants at the start of their programs (termed a “participant audit”). The audit built a picture of participants’ personal and professional profiles, their prior experiences in Jewish education, their motivations for participating in the programs, and what they aspired to accomplish with the audiences they served.

Then, every 12 months over a period of four years, Rosov Consulting conducted “clinical interviews” with the same subsample of 30 participants, three from each of the 10 grantee programs. These interviews explored participants’ motivations for joining the programs, what they experienced while taking part, what they gained from these experiences, how they translated their learning into their organizations, and, finally, what impact they, as program alumni, perceived these experiences to have had on the trajectory of their professional careers. The interviews contributed an important qualitative, longitudinal dimension to the work.

Rosov Consulting also developed a process for identifying outcomes that all 10 organizations would feel comfortable saying they seek to achieve. This effort included focus groups with program participants and conversations with program directors to sharpen understanding of each program’s primary goals. At one of the yearly in-person Community of Practice gatherings (pre-pandemic), the search for agreement around these outcomes was a focal point of the programming. Rosov Consulting then built the “Shared Outcomes Survey” on what came out of these discussions.

Throughout the evaluation, Rosov Consulting and the Foundation stayed in frequent contact and understood that this work would have to be an iterative process. As learnings came in, and as Rosov Consulting interacted more with the participants and the programs, both parties understood which parts of the initial work plan made sense to pursue and which needed to be adapted. This included conducting unanticipated research on how certain programs adapted during the pandemic and modifying the plan to write up case studies of individual programs.

Results

The evaluation work produced key learnings and a shared set of outcomes. It also gave the Foundation a greater appreciation of the kinds of large-scale evaluations it could pursue, significantly broadening its ambition in this respect. At the end of Rosov Consulting’s work, the research team reflected on what it had learned about the features of high-quality professional development programs. The team identified five design tensions that high-quality PD programs must engage:

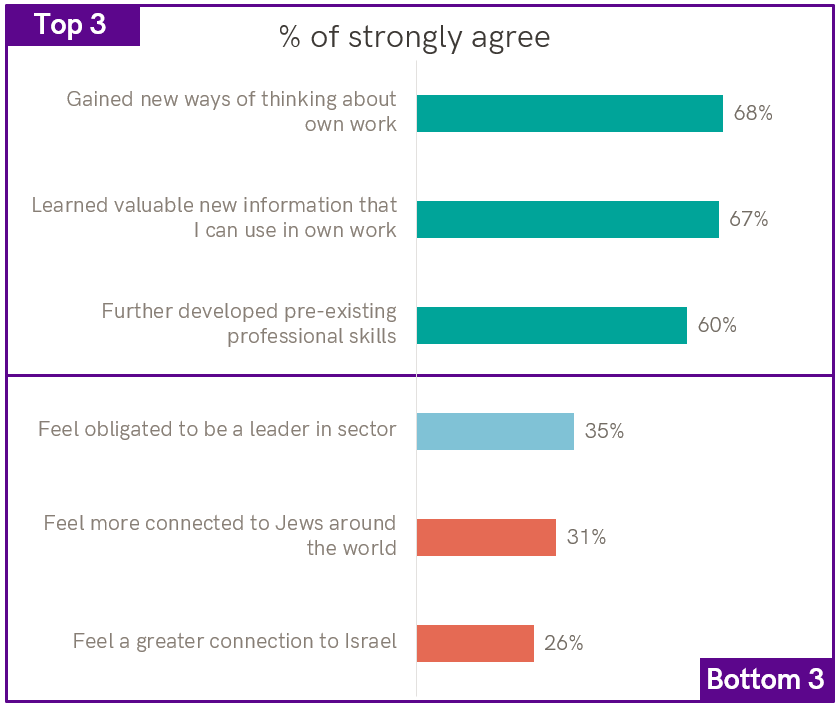

The evaluation also detailed important learnings about the outcomes created by the 10 participating programs:

- Shared Outcomes Survey data indicated that, overall, the programs helped participants become much more knowledgeable about and more accomplished in performing the professional tasks for which they are responsible—called “ways of thinking and doing.”

- Clinical interview data indicated that these professional outcomes have been quite durable, although with the passage of time interviewees found it increasingly difficult to draw causal links between what they knew and could do and what they gained from their programs.

- The Shared Outcomes Survey data also showed that, taken together, the programs socialized participants into professional communities that the participants very much valued. Again, interview data depicted how important these communities were, especially with the outbreak of the COVID-19 pandemic, and how, in the words of one interviewee, “relationships have become partnerships.”

- Finally, survey data revealed the degree to which program participants who started out with less-intensive Jewish backgrounds had an opportunity to grow and feel more confident as Jewish educators.

Lastly, evaluation instruments that tracked the trajectories of the 10 programs—especially the Participant Audit and the Shared Outcomes Survey—have now informed other field-wide work, such as CASJE’s On the Journey survey (supported in part by the Foundation) and Rosov Consulting’s work evaluating investments in the Foundation’s “Exceptional Jewish Leaders and Educators” grant category.